In many cases, what an SEO crawler marks as a fatal error needs immediate attention – but sometimes, it’s not an error at all.

This can happen even with the most popular SEO crawling tools such as Semrush Site Audit, Ahrefs Site Audit, Sitebulb, and Screaming Frog.

How can you tell the difference to avoid prioritizing a fix that doesn’t need to be done?

Here are a few real-life examples of such warnings and errors, together with explanations of why they may not be an issue for your website.

1. Indexability Issues (Noindex Pages on the Site)

Any SEO crawler will highlight and warn you about non-indexable pages on the site. Depending on the crawler type, noindex pages can be marked as warnings, errors, or insights.

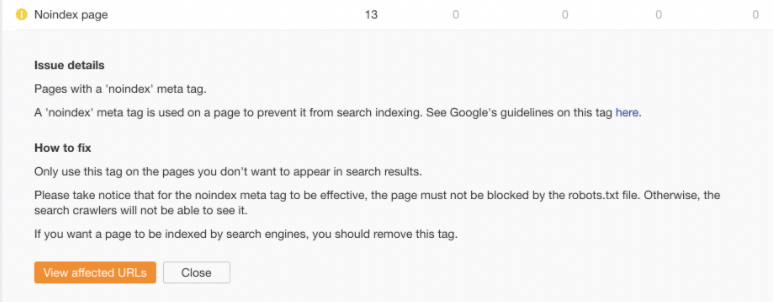

Here’s how this issue is marked in Ahrefs Site Audit:

Screenshot from Ahrefs Site Audit, September 2021

The Google Search Console Coverage report may also mark non-indexable pages as Errors (if the submitted sitemap contains non-indexable pages) or as Excluded, even though they are not actual issues.

Again, this only tells you that these URLs cannot be indexed.

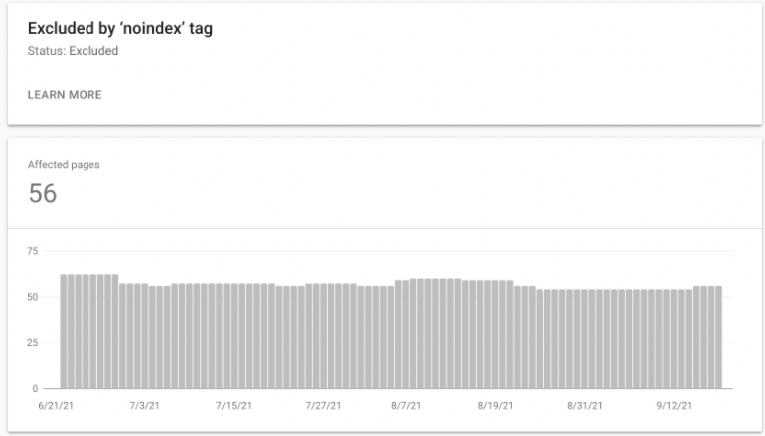

Here is what it looks like in GSC:

Screenshot from Google Search Console, September 2021

The fact that a URL has a “noindex” tag on it does not necessarily mean that this is an error. It only means that the page cannot be indexed by Google and other search engines.

The “noindex” tag is one of two possible indexing directives for crawlers; the other, which is also the default, is to index the page.

Practically every website contains URLs that should not be indexed by Google.

These may include, for example, tag pages (and sometimes category pages as well), login pages, password reset pages, or a thank you page.

Your task, as an SEO professional, is to review noindex pages on the site and decide whether they indeed should be blocked from indexing or whether the “noindex” tag could have been added by accident.
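To make that review less manual, here is a minimal Python sketch that reads the robots meta tag from a page's HTML and separates noindex pages that look intentional from ones worth a second look. The `EXPECTED_NOINDEX` path patterns and the `triage` helper are hypothetical names chosen for illustration, and the sketch only looks at `<meta name="robots">`, not at the `X-Robots-Tag` HTTP header or bot-specific meta tags.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urlparse


class RobotsMetaParser(HTMLParser):
    """Collects directives from every <meta name="robots"> tag on a page."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


# Hypothetical path patterns where a noindex tag is usually intentional.
EXPECTED_NOINDEX = re.compile(r"/(tag|login|password-reset|thank-you)(/|$)")


def triage(url, html):
    """Return 'indexable', 'noindex (expected)', or 'noindex (review)'."""
    parser = RobotsMetaParser()
    parser.feed(html)
    if "noindex" not in parser.directives:
        return "indexable"
    path = urlparse(url).path
    if EXPECTED_NOINDEX.search(path):
        return "noindex (expected)"
    return "noindex (review)"
```

Anything that comes back as “noindex (review)” is a candidate for an accidental tag; the pattern list should be adapted to the URL structure of the site being audited.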

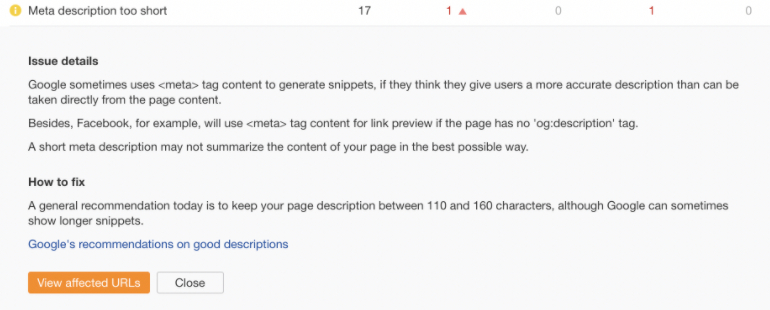

2. Meta Description Too Short or Empty

SEO crawlers will also check the meta elements of the site, including meta description elements. If the site does not have meta descriptions or they are too short (usually below 110 characters), then the crawler will mark it as an issue.
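The check itself is simple. The sketch below is a rough approximation of it in Python, not any tool's actual implementation: it assumes the 110-character threshold mentioned above, and `check_meta_description` is a hypothetical helper name.

```python
from html.parser import HTMLParser

MIN_LENGTH = 110  # threshold cited in the text; real tools may use other values


class MetaDescriptionParser(HTMLParser):
    """Stores the content of the first <meta name="description"> tag."""

    def __init__(self):
        super().__init__()
        self.description = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.description is not None:
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "description":
            self.description = (attr.get("content") or "").strip()


def check_meta_description(html, min_length=MIN_LENGTH):
    """Return 'missing or empty', 'too short', or 'ok'."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    if not parser.description:
        return "missing or empty"
    if len(parser.description) < min_length:
        return "too short"
    return "ok"
```

Note that the result says nothing about whether the description matters for a given page, which is exactly why the flag needs human judgment.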

Here’s what that looks like in Ahrefs:

Screenshot from Ahrefs Site Audit, September 2021

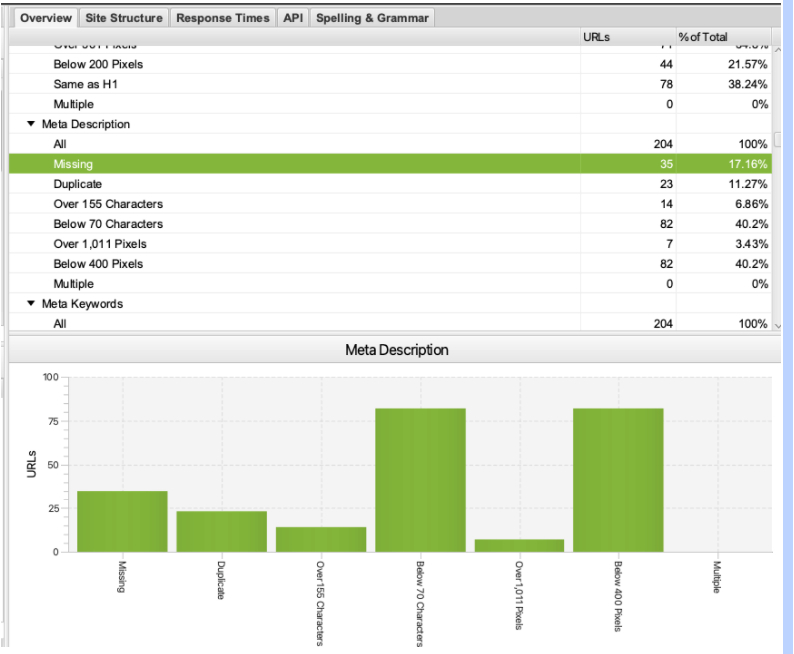

Here is how Screaming Frog displays it:

Screenshot from Screaming Frog, September 2021

Depending on the size of the site, it is not always feasible to create unique meta descriptions for all its webpages. You may not need them, either.

A good example of a site where it may not make sense is a huge ecommerce site with millions of URLs.

In fact, the bigger the site is, the less important this element gets.

The content of the meta description element, in contrast to the content of the title tag, is not taken into account by Google and does not influence rankings.

Search snippets sometimes use the meta description but are often rewritten by Google.

Here is what Google has to say about it in their Advanced SEO documentation:

“Snippets are automatically created from page content. Snippets are designed to emphasize and preview the page content that best relates to a user’s specific search: this means that a page might show different snippets for different searches.”

What you as an SEO need to do is keep in mind that each site is different. Use your common SEO sense when deciding whether meta descriptions are indeed an issue for that specific website or whether you can safely ignore the warning.

3. Meta Keywords Missing

Meta keywords were used 20+ years ago as a way to indicate to search engines such as AltaVista what key phrases a given URL wanted to rank for.

This was, however, heavily abused. Meta keywords were a sort of “spam magnet,” so the majority of search engines dropped support for this element.

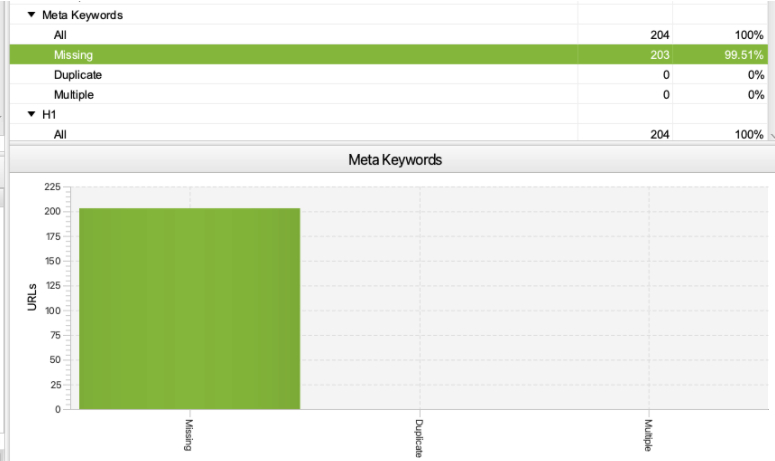

Screaming Frog always checks if there are meta keywords on the site, by default.

Since this is an obsolete SEO element, 99% of sites do not use meta keywords anymore.

Here’s what it looks like in Screaming Frog:

Screenshot from Screaming Frog, September 2021

New SEO pros or clients may get confused thinking that if a crawler marks something as missing, then this element should actually be added to the site. But that’s not the case here!

If meta keywords are missing on the site you are auditing, it’s a waste to recommend adding them.

4. Images Over 100 KB

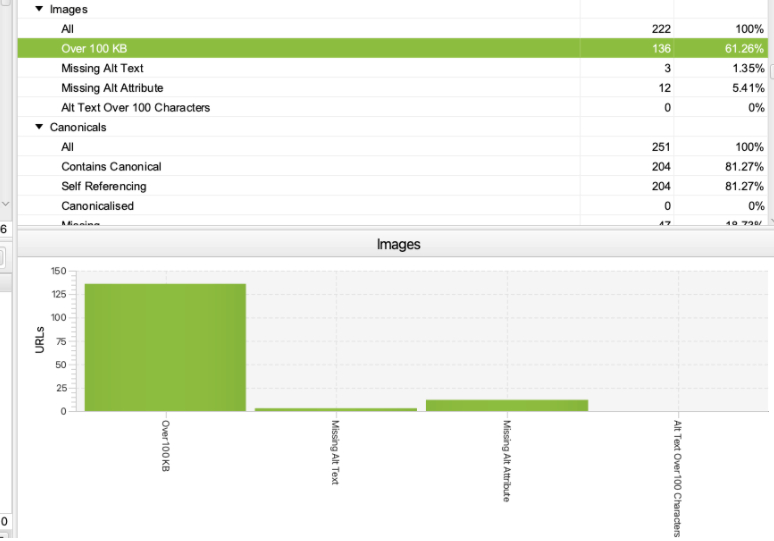

It’s important to optimize and compress images used on the site so that a gigantic PNG logo that weighs 10 MB does not need to be loaded on every webpage.

However, it’s not always possible to compress all images to below 100 KB.

Screaming Frog will always highlight and warn you about images that are over 100 KB. This is what it looks like in the tool:

Screenshot from Screaming Frog, September 2021

The fact that the site has images that are over 100 KB does not necessarily mean that the site has issues with image optimization or is very slow.

When you see this error, make sure to check the overall site’s speed and performance in Google PageSpeed Insights and the Google Search Console Core Web Vitals report.

If the site is doing okay and passes the Core Web Vitals assessment, then usually there is no need to compress the images further.

Tip: In this Screaming Frog report, you can sort the images by size, from the heaviest to the lightest, to check whether specific webpages carry any really huge images.
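If you export the image list from the crawler, the same sort takes a few lines of Python. This is only a sketch: the `(url, size_in_bytes)` input format and the `heaviest_images` helper are assumptions for illustration, not a Screaming Frog export format, and 100 KB is treated here as 100 × 1024 bytes.

```python
def heaviest_images(images, threshold_kb=100, top=10):
    """Return up to `top` images above the threshold, heaviest first.

    `images` is an iterable of (url, size_in_bytes) pairs.
    """
    limit = threshold_kb * 1024
    over = [(url, size) for url, size in images if size > limit]
    return sorted(over, key=lambda item: item[1], reverse=True)[:top]


# Hypothetical sample data for illustration only.
sample = [
    ("/img/logo.png", 10 * 1024 * 1024),
    ("/img/hero.jpg", 180 * 1024),
    ("/img/icon.svg", 4 * 1024),
]

for url, size in heaviest_images(sample):
    print(f"{url}: {size / 1024:.0f} KB")
```

Starting from the top of that list usually shows quickly whether the warning points at one or two outliers or at a site-wide pattern.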

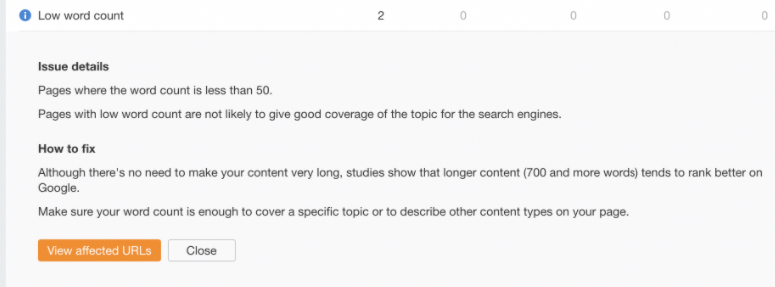

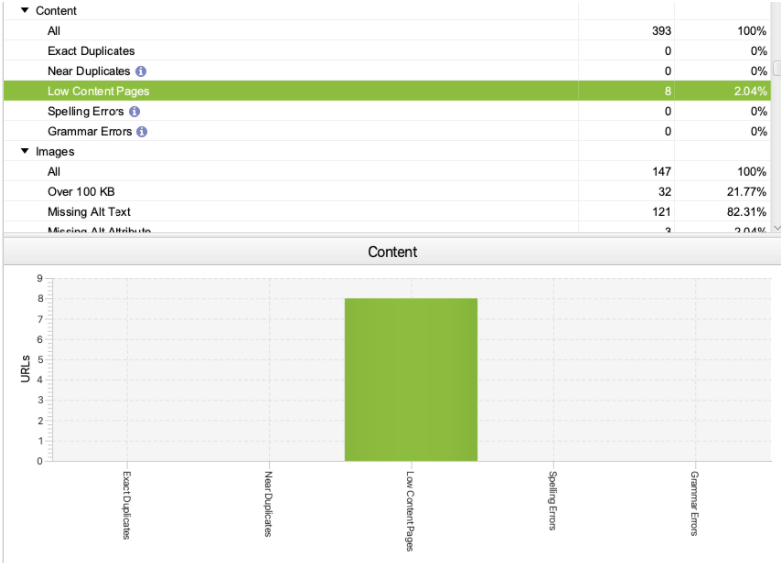

5. Low Content or Low Word Count Pages

Depending on the settings of the SEO crawler, most SEO auditing tools will highlight pages that are below 50-100 words as low content pages.

Here is what this issue looks like in Ahrefs:

Screenshot from Ahrefs Site Audit, September 2021

Screaming Frog, on the other hand, considers pages below 200 words to be low content pages by default (you can change that setting when configuring the crawl).

Here is how Screaming Frog reports on that:

Screenshot from Screaming Frog, September 2021

Just because a webpage has few words does not mean that it is an issue or error.

There are many types of pages that are meant to have a low word count, including some login pages, password reset pages, tag pages, or a contact page.

The crawler will mark these pages as low content but this is not an issue that will prevent the site from ranking well in Google.

What the tool is trying to tell you is that if you want a given webpage to rank highly in Google and bring a lot of organic traffic, then this webpage may need to be quite detailed and in-depth.

This often includes, among other things, a high word count. But there are different types of search intent, and content depth is not always what users are looking for to satisfy their needs.

When reviewing low word count pages flagged by the crawler, always think about whether these pages are really meant to have a lot of content. In many cases, they are not.
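For reference, a word count check of this kind can be approximated in a few lines. The sketch below is an assumption about how such a check might work, not how any particular crawler counts words: it strips tags, ignores `<script>` and `<style>` content, and compares the result with a configurable threshold (200 here, matching the Screaming Frog default mentioned above).

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, skipping <script> and <style> content."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def word_count(html):
    """Count whitespace-separated words in the page's text content."""
    parser = VisibleTextParser()
    parser.feed(html)
    return len(" ".join(parser.chunks).split())


def is_low_content(html, threshold=200):
    """True if the page has fewer words than the threshold."""
    return word_count(html) < threshold
```

A login page will always trip this check, which illustrates why the flag is a prompt for review rather than a verdict.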

6. Low HTML-Text Ratio

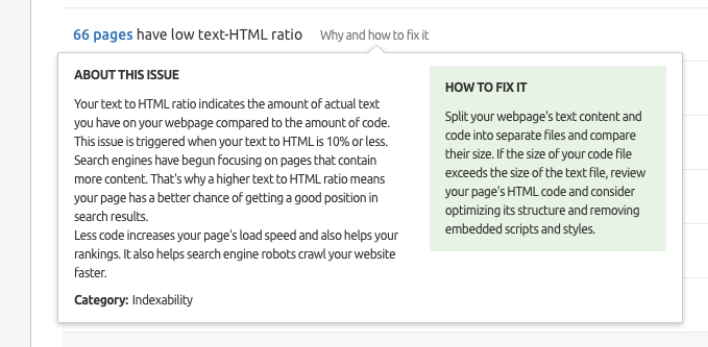

Semrush Site Audit will also alert you about the pages that have a low text-HTML ratio.

This is how Semrush reports on that:

Screenshot from Semrush Site Audit, September 2021

This alert is supposed to show you:

- Pages that may have a low word count.

- Pages that are potentially built in a complex way and have a huge HTML code file.

This warning often confuses less experienced or new SEO professionals, and you may need an experienced technical SEO pro to determine whether it’s something to worry about.

There are many variables that can affect the HTML-text ratio and it’s not always an issue if the site has a low/high HTML-text ratio. There is no such thing as an optimal HTML-text ratio.
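To see why the number is so easy to move, here is a rough Python sketch of one way to compute it: the length of the text content divided by the length of the full HTML source. The exact formula each tool uses is not given here, so treat this definition and the `text_html_ratio` name as assumptions.

```python
from html.parser import HTMLParser


class _TextCollector(HTMLParser):
    """Gathers text nodes outside <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._hidden = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._hidden += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._hidden:
            self._hidden -= 1

    def handle_data(self, data):
        if not self._hidden:
            self.parts.append(data)


def text_html_ratio(html):
    """Characters of text divided by characters of HTML source (0.0 to 1.0)."""
    if not html:
        return 0.0
    collector = _TextCollector()
    collector.feed(html)
    text = " ".join(" ".join(collector.parts).split())  # normalize whitespace
    return len(text) / len(html)


plain = "<p>Hello</p>"
wrapped = '<div class="wrapper"><p>Hello</p></div>'
print(text_html_ratio(plain) > text_html_ratio(wrapped))  # True
```

The same five characters of text yield a lower ratio once wrapper markup is added, even though nothing about the page's usefulness has changed.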

What you as an SEO pro may focus on instead is ensuring that the site’s speed and performance are optimal.

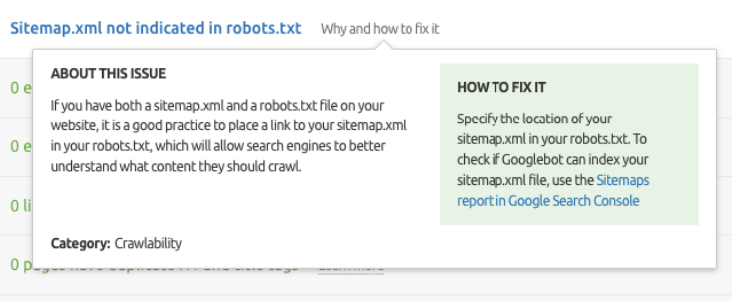

7. XML Sitemap Not Indicated in robots.txt

Robots.txt, in addition to being the file with crawler directives, is also the place where you can specify the URL of the XML sitemap so that Google can crawl it and index the content easily.

SEO crawlers such as Semrush Site Audit will notify you if the XML sitemap is not indicated in robots.txt.

This is how Semrush reports on that:

Screenshot from Semrush Site Audit, September 2021

At a glance, this looks like a serious issue, but in most cases it isn’t, because:

- Google usually does not have problems crawling and indexing smaller sites (below 10,000 pages).

- Google will not have problems crawling and indexing huge sites if they have a good internal linking structure.

- An XML sitemap does not need to be indicated in robots.txt if it’s correctly submitted in Google Search Console.

- An XML sitemap does not need to be indicated in robots.txt if it’s in the standard location – i.e., /sitemap.xml (in most cases).

Before you mark this as a high-priority issue in your SEO audit, make sure that none of the above is true for the site you are auditing.
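As a quick way to verify the robots.txt side of this, the sketch below extracts `Sitemap:` lines from the text of a robots.txt file and, if none are declared, falls back to the conventional `/sitemap.xml` location as a candidate to check by hand. The helper names are hypothetical, and the sketch works on robots.txt content you have already downloaded, so it makes no network requests.

```python
from urllib.parse import urljoin


def sitemaps_from_robots(robots_txt):
    """Return the sitemap URLs declared in a robots.txt file's text."""
    urls = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        field, _, value = line.partition(":")
        if field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


def sitemap_candidates(site_root, robots_txt):
    """Declared sitemaps, or the conventional location if none are declared."""
    declared = sitemaps_from_robots(robots_txt)
    return declared or [urljoin(site_root, "/sitemap.xml")]


robots = """User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap_index.xml
"""
print(sitemap_candidates("https://example.com", robots))
```

If the fallback URL resolves to a valid sitemap, or the sitemap is already submitted in Google Search Console, the missing robots.txt entry is unlikely to deserve a high priority.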

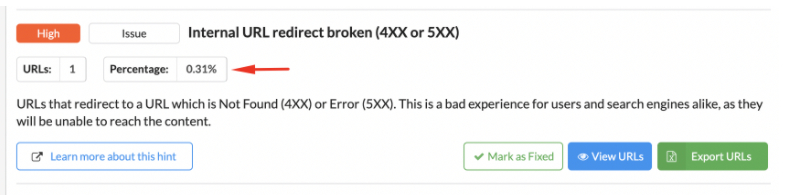

Bonus: The Tool Reports a Critical Error That Relates to a Few Unimportant URLs

Even if the tool is showing a real issue, such as a 404 page on the site, it may not be a serious issue if only one out of millions of webpages on the site returns status 404 or if there are no links pointing to that 404 page.

That’s why, when assessing the issues detected by the crawler, you should always check how many webpages they relate to and which ones.

You need to give the error context.

Sitebulb, for example, will show you the percentage of URLs that a given error relates to.

Here is an example of an internal URL redirecting to a broken URL returning 4XX or 5XX reported by Sitebulb:

Screenshot from Sitebulb Website Crawler, September 2021

It looks like a pretty serious issue, but it only relates to one unimportant webpage, so it’s definitely not a high-priority issue.
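If your crawler does not report that percentage, it is easy to work out from an export. The Python sketch below is one possible way to put an error in context: it computes the share of crawled URLs affected and bumps the priority only if a key page is involved or the share crosses a threshold. The `important_urls` set and the 1% default threshold are illustrative assumptions, not values taken from any tool.

```python
def affected_share(affected_urls, total_crawled):
    """Percentage of crawled URLs that an issue relates to."""
    if total_crawled <= 0:
        raise ValueError("total_crawled must be positive")
    return 100 * len(set(affected_urls)) / total_crawled


def priority(affected_urls, total_crawled, important_urls, share_threshold=1.0):
    """Rough triage: 'high' if a key URL is hit or the share is large."""
    if set(affected_urls) & set(important_urls):
        return "high"
    if affected_share(affected_urls, total_crawled) >= share_threshold:
        return "high"
    return "low"


# Hypothetical audit data for illustration only.
key_pages = {"/", "/pricing"}
print(priority(["/old-promo"], 5000, key_pages))  # low
print(priority(["/pricing"], 5000, key_pages))    # high
```

The point of the sketch is the order of the questions: which URLs are affected first, how many second.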

Final Thoughts & Tips

SEO crawlers are indispensable tools for technical SEO professionals. However, what they reveal must always be interpreted within the context of the website and your goals for the business.

It takes time and experience to be able to tell the difference between a pseudo-issue and a real one. Fortunately, most crawlers offer extensive explanations of the errors and warnings they display.

That’s why it’s always a good idea – especially for beginner SEO professionals – to read these explanations and the crawler documentation. Make sure you really understand what a given issue means and whether it’s indeed worth escalating to a fix.


Featured image: Pro Symbols/Shutterstock
