As an SEO professional, it’s your goal – and responsibility – to keep your website running smoothly and to stay on top of changes to its content.

On September 23, I moderated a sponsored Search Engine Journal webinar presented by Steven van Vessum, VP of Community at ContentKing.

He shared how to finally take a proactive stance in your SEO processes by catching (and resolving!) problems before they impact your rankings.

Here’s a recap of the presentation.

Search engines never sleep. They are continuously crawling your site to update their indexes.

SEO mistakes can happen anytime. You need to fix them before they impact your rankings and bottom line.

About 80% of SEO issues go unnoticed for at least four weeks.

An average SEO issue can cost up to $75,000 in lost revenue.

But with the right tooling and processes, you can mitigate these issues.

But What About Existing Tools?

Google Search Console and Google Analytics are every SEO professional’s go-to tools of the trade.

But they’re not enough if you want to take a proactive stance in your SEO processes.

While Google Search Console does send notifications, they are delayed and limited.

And by the time the alerts you’ve set up in Google Analytics are sent, your organic traffic has already taken a hit.

4 Common SEO Issues & How to Prevent Them

Let’s cover the most common SEO issues we come across, and discuss how to prevent them from happening.

1. Client or Colleague Gone Rogue

You don’t really see this one coming.

Quite a few SEO professionals have probably experienced at least one of the following scenarios with a client or colleague:

- “The CMS was telling us to update it, so we did – including the theme and all of its plugins.” (And they did it directly in the live environment.)

- “We tweaked the page titles on these key pages all by ourselves!”

- “These pages didn’t look important, so we deleted them.” (Yes, those were the money pages.)

- “We didn’t like the URLs on these pages, so we changed them.”

These scenarios are frustrating and can lead to a decline in your traffic and rankings.

How to Prevent It

Take these steps to prevent rogue clients or colleagues from damaging your website’s SEO unintentionally.

- Track changes: You need to know what’s going on with the site.

- Get alerted: Find out when someone goes rogue.

- Limit access: A content marketer doesn’t need permission to update the CMS.

- Set clear rules of engagement: Everyone needs to know what they can and can’t do.

A tool with a change tracking feature, such as ContentKing, comes in handy in these types of situations.

The platform tracks what pages were added, changed, redirected, and removed. You essentially have a full change log of your entire site.
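To make the idea of a change log concrete, here is a minimal sketch in Python that compares two crawl snapshots and sorts URLs into added, removed, redirected, and changed. The `PageSnapshot` record (status code plus page title) and the sample URLs are hypothetical; real change tracking compares far more elements, and this is not how ContentKing works internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PageSnapshot:
    """Hypothetical snapshot format: only status code and title are tracked."""
    status: int   # HTTP status code, e.g. 200 or 301
    title: str    # contents of the <title> element


REDIRECT_CODES = (301, 302, 307, 308)


def diff_snapshots(old: dict[str, PageSnapshot],
                   new: dict[str, PageSnapshot]) -> dict[str, list[str]]:
    """Classify URLs as added, removed, redirected, or changed between two crawls."""
    log: dict[str, list[str]] = {"added": [], "removed": [], "redirected": [], "changed": []}
    log["added"] = sorted(new.keys() - old.keys())
    log["removed"] = sorted(old.keys() - new.keys())
    for url in sorted(old.keys() & new.keys()):
        before, after = old[url], new[url]
        if before.status == 200 and after.status in REDIRECT_CODES:
            log["redirected"].append(url)
        elif before != after:
            # Any other difference in status or title counts as a change.
            log["changed"].append(url)
    return log


old = {
    "/": PageSnapshot(200, "Acme Shoes"),
    "/sale": PageSnapshot(200, "Sale"),
    "/boots": PageSnapshot(200, "Boots"),
}
new = {
    "/": PageSnapshot(200, "Acme Shoes | Home"),
    "/sale": PageSnapshot(301, "Sale"),
    "/sneakers": PageSnapshot(200, "Sneakers"),
}
result = diff_snapshots(old, new)
print(result)
```

Running a comparison like this after every crawl and storing the results gives you a simple, if coarse, change history.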

You also want to get alerts – but you only need them for issues and changes that matter.

Alerts need to be smart.

You don’t need to receive an alert if the page title changes on some of the least important pages, but you want an alert when changes are made to your homepage.

2. Development Team Gone Rogue

This happens when there is no proper coordination between the development and SEO teams.

In one example, the development team of an ecommerce store didn’t include SEO specialists in selecting and testing a new pagination system.

They went with one that heavily relied on JavaScript, which caused big delays in the crawling and indexing processes because all of the paginated pages had to be rendered.

Because of this, it was much harder for search engines to discover and value new product pages – not to mention re-evaluate the value of existing product pages.

Another example: changes to the U.S. section of a site were approved, but they were hastily shipped across all language versions.

It’s so easy to mess up when you’re dealing with localized sites.
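For localized sites, one simple safeguard is to compare the URLs a release actually changed against the section that was approved. A minimal sketch, assuming each language version lives under its own path prefix (such as `/us/` or `/de/`) and that you already have a list of changed URLs from your change tracking; the function name and URLs are hypothetical.

```python
from urllib.parse import urlparse


def out_of_scope_changes(changed_urls: list[str], approved_prefix: str) -> list[str]:
    """Return changed URLs whose path falls outside the approved section.

    Hypothetical check: assumes language versions are separated by path prefix.
    """
    return [
        url for url in changed_urls
        if not urlparse(url).path.startswith(approved_prefix)
    ]


changed = [
    "https://www.example.com/us/pricing",
    "https://www.example.com/de/preise",
    "https://www.example.com/fr/tarifs",
]
unexpected = out_of_scope_changes(changed, approved_prefix="/us/")
if unexpected:
    print("Changes shipped outside the approved section:", unexpected)
```

If your language versions use subdomains or separate domains instead, the same idea applies with a check on the host rather than the path.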

How to Prevent It

As with the first issue, you need to:

- Track all changes.

- Get alerted when someone goes rogue.

- Set clear rules of engagement.

- Most importantly, do proper QA testing.

3. Releases Gone Bad

Let’s start with a classic: during a release, the staging robots.txt is accidentally carried over to production, which prevents crawlers from accessing the site.

Related to this, we often see the same thing happen with a meta robots noindex, or the more exotic noindex sent through the X-Robots-Tag HTTP header, which is a lot harder to spot.
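Because a noindex can hide in either the HTML or the HTTP response headers, an automated check should look in both places. A minimal sketch using Python’s standard `html.parser`; it only inspects the generic `robots` meta tag and the `X-Robots-Tag` header (not crawler-specific tags such as `googlebot`), and the function names are illustrative, not part of any tool.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)  # tag and attribute names arrive lowercased
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.directives.append((attributes.get("content") or "").lower())


def is_noindexed(html: str, headers: dict[str, str]) -> bool:
    """True if the page carries noindex in a meta robots tag or an X-Robots-Tag header."""
    parser = RobotsMetaParser()
    parser.feed(html)
    meta_noindex = any("noindex" in directive for directive in parser.directives)
    # HTTP header names are case-insensitive, so normalize them before the lookup.
    normalized = {name.lower(): value.lower() for name, value in headers.items()}
    header_noindex = "noindex" in normalized.get("x-robots-tag", "")
    return meta_noindex or header_noindex


page = "<html><head><title>Boots</title></head><body></body></html>"
print(is_noindexed(page, {"X-Robots-Tag": "noindex, nofollow"}))
print(is_noindexed(page, {}))
```

The header case is the one that is easy to miss by eye: the HTML looks perfectly clean, yet the page is excluded from the index.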

You need to monitor your robots.txt. It can make or break your SEO performance.

One character makes all the difference.
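To see how much one character matters, Python’s standard `urllib.robotparser` module can evaluate two robots.txt files that differ only by a single slash. The file contents and the URL below are illustrative examples.

```python
from urllib.robotparser import RobotFileParser


def allows_crawling(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Parse robots.txt content and check whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# An empty Disallow value blocks nothing: the whole site may be crawled.
production = "User-agent: *\nDisallow:\n"
# One extra "/" blocks the entire site for every crawler.
staging = "User-agent: *\nDisallow: /\n"

url = "https://www.example.com/products/"
print(allows_crawling(production, url))
print(allows_crawling(staging, url))
```

A check like this, run against the live robots.txt after every release, turns a one-character mistake into an immediate failure instead of a slow traffic decline.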

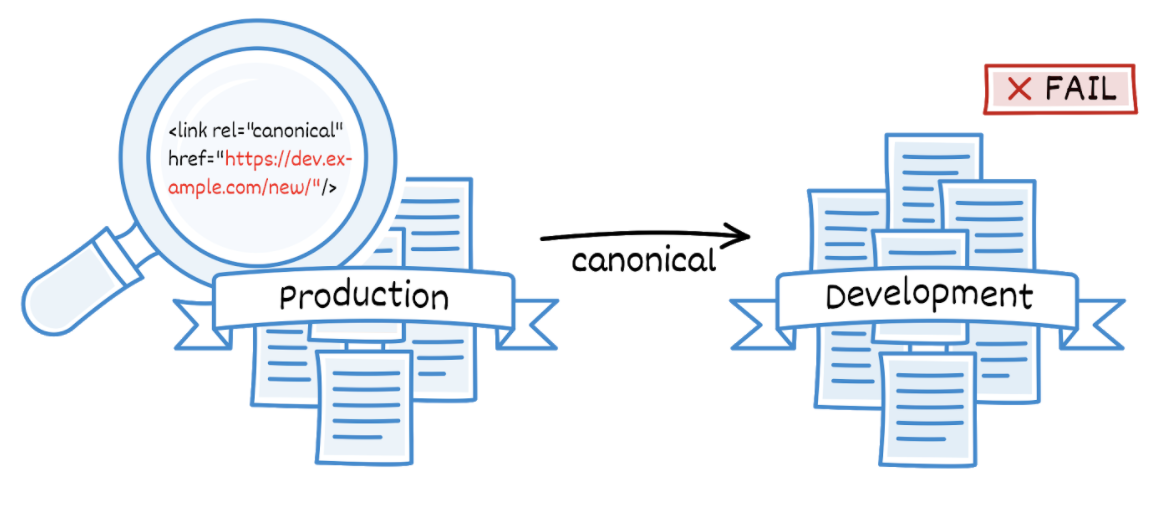

In the example above, a new site section was released with hard-coded canonicals pointing to the development environment.

The development environment was blocked using HTTP authentication, so it wasn’t accessible to search engines.

The digital marketing manager kept wondering: “When is this new section finally going to start ranking?”

Issues like these are especially tricky when canonicals are implemented in the HTTP headers, because they’re hard to spot manually.
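Since canonicals can live in the HTML `<head>` or in an HTTP `Link` header, an automated check should read both and flag any canonical that points away from the production host. A minimal sketch, assuming `www.example.com` is production and `dev.example.com` is the development environment (both hypothetical); the `Link` header parsing is deliberately simplified and assumes URLs contain no commas or semicolons.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class CanonicalParser(HTMLParser):
    """Collects href values of <link rel="canonical"> elements."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attributes.get("href") or "")


def canonicals_from_link_header(link_header: str) -> list[str]:
    """Extract canonical URLs from a header such as <https://x/y>; rel="canonical"."""
    urls = []
    for entry in link_header.split(","):
        segments = [segment.strip() for segment in entry.split(";")]
        target = segments[0].strip("<>")
        for param in segments[1:]:
            key, _, value = param.partition("=")
            if key.strip().lower() == "rel" and value.strip().strip('"').lower() == "canonical":
                urls.append(target)
    return urls


def foreign_canonicals(html: str, link_header: str, production_host: str) -> list[str]:
    """Return canonical URLs (from HTML or the Link header) that point to another host."""
    parser = CanonicalParser()
    parser.feed(html)
    candidates = parser.canonicals + canonicals_from_link_header(link_header)
    return [url for url in candidates if urlparse(url).netloc != production_host]


html = '<head><link rel="canonical" href="https://www.example.com/new-section/"></head>'
header = '<https://dev.example.com/new-section/>; rel="canonical"'
print(foreign_canonicals(html, header, "www.example.com"))
```

Note that a relative canonical URL would also be flagged, since it has no host; resolve it against the page URL first if your site uses relative canonicals.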

How to Prevent It

You can avoid this issue by implementing automated quality assurance testing during pre-release, release, and post-release.

It’s not just about having a monitoring system like ContentKing; you also need to have the right processes in place.

For instance, if a release goes horribly wrong, you need to be able to revert it quickly.

Tracking all changes and getting alerted when something goes wrong will also help.
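Automated QA can start as a small script that runs the same checks against a list of pages before and after each release. A minimal sketch of such a gate: each check inspects a page and returns an error message, and any failure after a release is your signal to revert. The `Page` fields and the two example checks are hypothetical; a real gate would add checks for robots.txt, noindex, canonicals, and whatever else matters for your site.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Page:
    """Hypothetical minimal view of a page fetched after a release."""
    url: str
    status: int
    title: str


# A check returns an error message, or None when the page passes.
Check = Callable[[Page], Optional[str]]


def status_is_200(page: Page) -> Optional[str]:
    return None if page.status == 200 else f"{page.url}: status {page.status}"


def has_title(page: Page) -> Optional[str]:
    return None if page.title.strip() else f"{page.url}: empty <title>"


def run_release_checks(pages: list[Page], checks: list[Check]) -> list[str]:
    """Run every check against every page and collect all failure messages."""
    failures = []
    for page in pages:
        for check in checks:
            message = check(page)
            if message is not None:
                failures.append(message)
    return failures


pages = [Page("/", 200, "Acme Shoes"), Page("/boots", 404, "")]
failures = run_release_checks(pages, [status_is_200, has_title])
if failures:
    # Hypothetical hook: this is where your rollback procedure would be triggered.
    print("Release failed QA; roll back:", failures)
```

Keeping checks as small independent functions makes it easy to add a new one each time a release breaks something in a way you did not anticipate.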

4. Buggy CMS Plugins

Buggy CMS plugins can be difficult to handle.

Security updates are often applied automatically. When they contain bugs, those bugs are introduced without your knowledge.

Over the years, there have been a few examples where buggy CMS plugins changed SEO configurations of hundreds of thousands of sites in one update.

Almost everyone thought it wouldn’t happen to them and was caught by surprise.

How to Prevent It

Disabling automatic updates will keep this problem at bay.

Likewise, you also want to track your changes and get alerts when something goes wrong.

Traditional Crawling vs. Continuous Monitoring

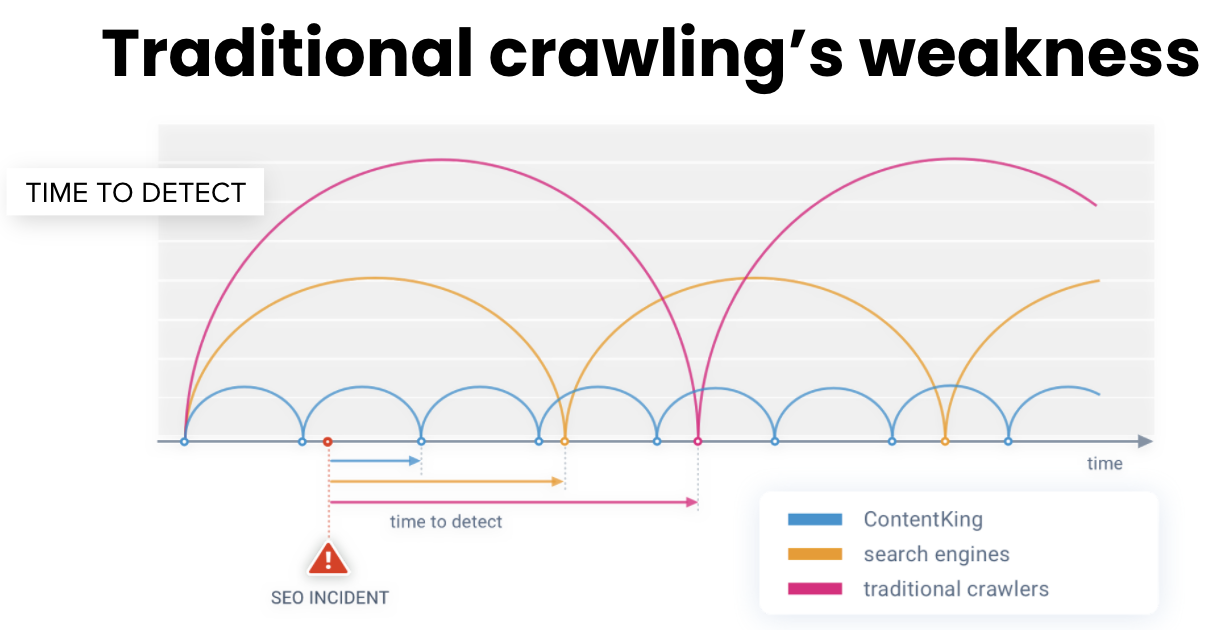

You’re probably wondering how traditional crawling measures up against continuous monitoring.

Let’s look at an example.

Say you run a weekly scheduled crawl every Monday. But what if something goes wrong on Tuesday?

Then you won’t know about it until the next Monday.

By then, search engines will have picked up on it.

With ContentKing, you would already have had the fix in place.

Another example is when you’re crawling a large site that takes 2–3 days to finish.

By the time it’s done, you’ll be looking at old data.

In the meantime, a lot has changed already – even your robots.txt and XML sitemaps may have already changed.
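The timing argument is easy to put into numbers. The sketch below assumes an issue is only detected by a crawl that starts after the issue appears, and that results are available once that crawl finishes; the dates, the Monday 09:00 schedule, and the crawl durations are example values based on the two scenarios above.

```python
from datetime import datetime, timedelta


def detection_time(issue_at: datetime, first_crawl: datetime,
                   interval: timedelta, crawl_duration: timedelta) -> datetime:
    """When a scheduled crawl would first report an issue that appeared at issue_at."""
    crawl_start = first_crawl
    # Skip crawls that started before the issue existed; they cannot see it.
    while crawl_start < issue_at:
        crawl_start += interval
    return crawl_start + crawl_duration


monday = datetime(2020, 9, 21, 9, 0)           # Monday 09:00, weekly crawl start
tuesday_issue = datetime(2020, 9, 22, 14, 0)   # something breaks Tuesday afternoon
week = timedelta(days=7)

small_site = detection_time(tuesday_issue, monday, week, timedelta(hours=1))
large_site = detection_time(tuesday_issue, monday, week, timedelta(days=3))
print(small_site - tuesday_issue)  # 5 days, 20:00:00
print(large_site - tuesday_issue)  # 8 days, 19:00:00
```

In the large-site case, the issue goes unreported for more than a week, which is plenty of time for search engines to recrawl the affected pages.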

Monitoring & Proactive Alerting

The question isn’t if something will go wrong.

The question is when.

When something breaks, you need to know immediately and fix it before Google notices it.

Using monitoring and proactive alerting tools will allow you to take a proactive stance in your SEO processes.

Q&A

Here are just some of the attendee questions answered by Steven van Vessum.

Q: How do you track changes?

Steven van Vessum (SV): The best way to keep track of changes on your site is to monitor it with a platform like ContentKing.

Because our platform monitors your site 24/7, you’ll see all changes in real time. There’s no need to do anything on your end!

If you want to go all out, you can set up custom element extraction. With this, you can track changes for anything on the page.

Some examples: whether a product is out of stock, review scores and counts, pricing, or anything else.
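Outside of any particular platform, custom element extraction boils down to pulling a specific value out of each page and comparing it with the previous fetch. A minimal sketch using Python’s standard `html.parser`, assuming (hypothetically) that price and stock status are marked up with `itemprop` attributes; it is a simplified illustration, not ContentKing’s extraction feature, which you configure in the platform.

```python
from html.parser import HTMLParser
from typing import Optional


class ItempropExtractor(HTMLParser):
    """Records the content attribute, or first text, of elements with an itemprop."""

    def __init__(self) -> None:
        super().__init__()
        self.values: dict[str, str] = {}
        self._current: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        prop = attributes.get("itemprop")
        if prop is None:
            return
        if attributes.get("content") is not None:
            # e.g. <meta itemprop="availability" content="https://schema.org/InStock">
            self.values[prop] = attributes["content"]
        else:
            self._current = prop  # capture the element's text in handle_data

    def handle_data(self, data):
        if self._current is not None and data.strip():
            self.values[self._current] = data.strip()
            self._current = None


def extract(html: str) -> dict[str, str]:
    parser = ItempropExtractor()
    parser.feed(html)
    return parser.values


def changed_fields(old_html: str, new_html: str) -> dict[str, tuple]:
    """Map each extracted field that differs to its (old, new) values."""
    before, after = extract(old_html), extract(new_html)
    return {
        key: (before.get(key), after.get(key))
        for key in before.keys() | after.keys()
        if before.get(key) != after.get(key)
    }


yesterday = ('<span itemprop="price">49.99</span>'
             '<meta itemprop="availability" content="https://schema.org/InStock">')
today = ('<span itemprop="price">59.99</span>'
         '<meta itemprop="availability" content="https://schema.org/OutOfStock">')
print(changed_fields(yesterday, today))
```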

Q: What data sources are ContentKing’s alerts based on?

SV: In order to answer this, let me explain how ContentKing works in a nutshell.

ContentKing monitors sites 24/7 – and upon every page request, we check whether anything changed since our last request.

If no changes were found, ContentKing won’t send any alerts.

If we do detect changes, we evaluate their impact and whether they lead to an issue.

When evaluating the impact of changes, we take into account, among other factors:

- How many pages are affected.

- How important these pages are.

- How impactful the changes are from an SEO point of view.
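The answer does not say how these factors are weighed, so here is a purely hypothetical sketch of how they could be combined into a single score that decides whether an alert goes out. The change types, severity weights, 0.0–1.0 importance scale, and threshold are all made-up values for illustration, not ContentKing’s model.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical description of one detected change."""
    kind: str                      # e.g. "title_changed", "noindex_added"
    page_importance: list[float]   # importance (0.0-1.0) of each affected page


# Made-up severity weights per change type, on a 0.0-1.0 scale.
SEVERITY = {
    "title_changed": 0.4,
    "canonical_changed": 0.7,
    "noindex_added": 1.0,
}


def impact_score(change: Change) -> float:
    """Severity times summed importance, so more and more important pages score higher."""
    severity = SEVERITY.get(change.kind, 0.5)  # unknown change types get a middling weight
    return severity * sum(change.page_importance)


def should_alert(change: Change, threshold: float = 0.3) -> bool:
    return impact_score(change) >= threshold


homepage_title = Change("title_changed", [1.0])
deep_page_titles = Change("title_changed", [0.05, 0.05, 0.05])
noindex_on_category = Change("noindex_added", [0.6])

for change in (homepage_title, deep_page_titles, noindex_on_category):
    print(change.kind, round(impact_score(change), 2), should_alert(change))
```

With these example weights, a title change on the homepage triggers an alert while the same change on a few unimportant pages does not, which matches the “smart alerts” idea from earlier in the article.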

On top of that, you can set up alerts on data from external data sources such as Google Analytics and Google Search Console.

We automatically pre-configure alerts so they’re set up correctly, but if you want to customize sensitivity thresholds, alert scope, and routing, you can go all out.

Q: Is there an accurate and reliable way to identify plugin conflicts?

SV: As far as I know, there isn’t one. We’re dealing with many different website platforms, and each platform works differently.

Some WordPress plugins, for instance, detect whether potentially conflicting plugins are running too, but beyond that, it’s a matter of keeping track of everything that happens on your site.

When you encounter strange behavior, you can investigate and fix it.

The magic words here are “keeping a watchful eye” – without this, you won’t know whether anything changes on your site and you won’t be able to take decisive action.

A great approach to identifying plugin conflicts is to have a staging or acceptance environment running where you first roll out any changes, prior to releasing them to production.

That way you can spot any conflicts before they make it onto your production environment.

Q: Why do we need to disallow crawlers while correcting/checking production failures?

SV: You shouldn’t – you should always avoid disallowing search engine crawlers on your production environment.

And you shouldn’t be making changes directly to your production environment in the first place.

Use a staging or acceptance environment where you validate your changes, make sure you didn’t change more than you were planning on, and then you release your changes to the production environment.

You should, however, make sure that search engine crawlers can’t access your staging or acceptance environment. We explain the best way to do this in detail in this guide.
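One common approach, and the one used for the development environment in the canonical example earlier, is HTTP authentication. Unlike a staging robots.txt, it can’t be accidentally carried over to production as a crawl block. A minimal sketch as WSGI middleware using only Python’s standard library; the `require_basic_auth` name, the realm, and the credentials are placeholders, and in practice you would usually configure this in your web server or hosting platform instead.

```python
import base64
import hmac
from typing import Callable, Iterable


def require_basic_auth(app: Callable, username: str, password: str) -> Callable:
    """Wrap a WSGI app so every request must carry valid HTTP Basic credentials."""
    expected = "Basic " + base64.b64encode(f"{username}:{password}".encode()).decode()

    def guarded(environ: dict, start_response: Callable) -> Iterable[bytes]:
        supplied = environ.get("HTTP_AUTHORIZATION", "")
        # compare_digest avoids leaking information through timing differences.
        if hmac.compare_digest(supplied.encode(), expected.encode()):
            return app(environ, start_response)
        start_response("401 Unauthorized", [
            ("WWW-Authenticate", 'Basic realm="staging"'),
            ("Content-Type", "text/plain"),
        ])
        return [b"Authentication required"]

    return guarded


def staging_site(environ, start_response):
    """Stand-in for the real staging application."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"Staging homepage"]


application = require_basic_auth(staging_site, "team", "change-me")
```

For a quick local test, `wsgiref.simple_server.make_server("", 8000, application).serve_forever()` serves the wrapped app; crawlers requesting it without credentials receive a 401 response.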

[Slides] How to Catch & Fix SEO Issues Before It’s Too Late

Check out the SlideShare below.


More Resources:

- How Often Should You Perform Technical Website Crawls for SEO?

- 5 of the Most Complex SEO Problems & How to Fix Them

- How to Overcome Technical SEO Issues on a Budget

Image Credits

All screenshots taken by author, September 2020