If there’s one thing we SEO pros are good at, it’s making things complicated.

That’s not necessarily a criticism.

Search engine algorithms, website coding and navigation, choosing and evaluating KPIs, setting content strategy, and more are highly complex tasks involving lots of specialized knowledge.

But as important as those things all are, at the end of the day, there is really just a small set of things that will make most of the difference in your SEO success.

Three things – three pillars – are foundational to achieving your SEO goals:

- Authority.

- Relevance.

- Experience (of the users and bots visiting the site).

Nutritionists tell us our bodies need protein, carbohydrates, and fats in the right proportions to stay healthy. Neglect any of the three, and your body will soon fall into disrepair.

Similarly, a healthy SEO program involves a balanced application of authority, relevance, and experience.

Authority: Do You Matter?

In SEO, authority refers to the importance or weight given to a page relative to other pages that are potential results for a given search query.

Modern search engines such as Google use many factors (or signals) when evaluating the authority of a webpage.

Why does Google care about assessing the authority of a page?

For most queries, there are thousands or even millions of pages available that could be ranked.

Google wants to prioritize the ones that are most likely to satisfy the user with accurate, reliable information that fully answers the intent of the query.

Google cares about serving users the most authoritative pages for their queries because users who are satisfied by the pages they click through to from Google are more likely to use Google again, and thus get more exposure to Google’s ads, the primary source of its revenue.

Authority Came First

Assessing the authority of webpages was the first fundamental problem search engines had to solve.

Some of the earliest search engines relied on human evaluators, but as the World Wide Web exploded, that quickly became impossible to scale.

Google overtook all its rivals because its creators, Larry Page and Sergey Brin, developed the idea of PageRank, using links from other pages on the web as weighted citations to assess the authoritativeness of a page.

Page and Brin realized that links were an already-existing system of constantly evolving polling, in which other authoritative sites “voted” for pages they saw as reliable and relevant to their users.

Search engines use links much like we might treat scholarly citations; the more scholarly papers relevant to a source document that cite it, the better.

The relative authority and trustworthiness of each of the citing sources come into play as well.

So, of our three fundamental categories, authority came first because it was the easiest to crack, given the ubiquity of hyperlinks on the web.

The other two, relevance and user experience, would be tackled later, as machine learning/AI-driven algorithms developed.

Links Still Primary For Authority

The big innovation that made Google the dominant search engine in a short period was that it used an analysis of links on the web as a ranking factor.

This started with a paper by Larry Page and Sergey Brin called The Anatomy of a Large-Scale Hypertextual Web Search Engine.

The essential insight behind this paper was that the web is built on the notion of documents inter-connected with each other via links.

Since putting a link on your site to a third-party site might cause a user to leave your site, there was little incentive for a publisher to link to another site unless it was really good and of great value to their site’s users.

In other words, linking to a third-party site acts a bit like a “vote” for it, and each vote could be considered an endorsement, endorsing the page the link points to as one of the best resources on the web for a given topic.

Then, in principle, the more votes you get, the better and the more authoritative a search engine would consider you to be, and you should, therefore, rank higher.

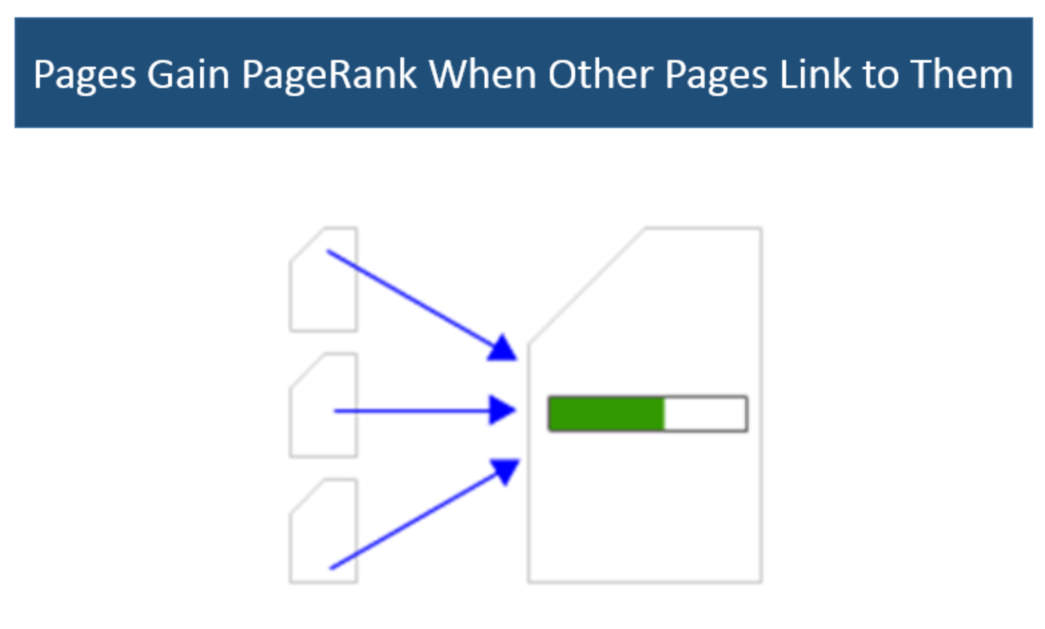

Passing PageRank

A significant piece of the initial Google algorithm was based on the concept of PageRank, a system for evaluating which pages are the most important based on scoring the links they receive.

So, a page that has large quantities of valuable links pointing to it will have a higher PageRank and will, in principle, be likely to rank higher in the search results than other pages without as high a PageRank score.

When a page links to another page, it passes a portion of its PageRank to the page it links to.

Thus, pages accumulate more PageRank based on the number and quality of links they receive.
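To make the idea of passing PageRank concrete, here is a minimal Python sketch of the simplified, textbook version of the calculation. It is an illustration only, not Google's production algorithm. The `toy_web` link graph is made up, the damping factor of 0.85 and the iteration count are assumptions, and scores are normalized to sum to 1 for readability.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank. `links` maps each page to the pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits the score it passes evenly across its outbound links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its score across all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page web: "guide" receives links from three pages,
# while "forum" receives none.
toy_web = {
    "home": ["guide", "blog"],
    "blog": ["guide"],
    "guide": ["home"],
    "forum": ["guide"],
}

scores = pagerank(toy_web)
for page, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph, the page with the most inbound links ends up with the highest score, and the page nobody links to ends up with the lowest.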

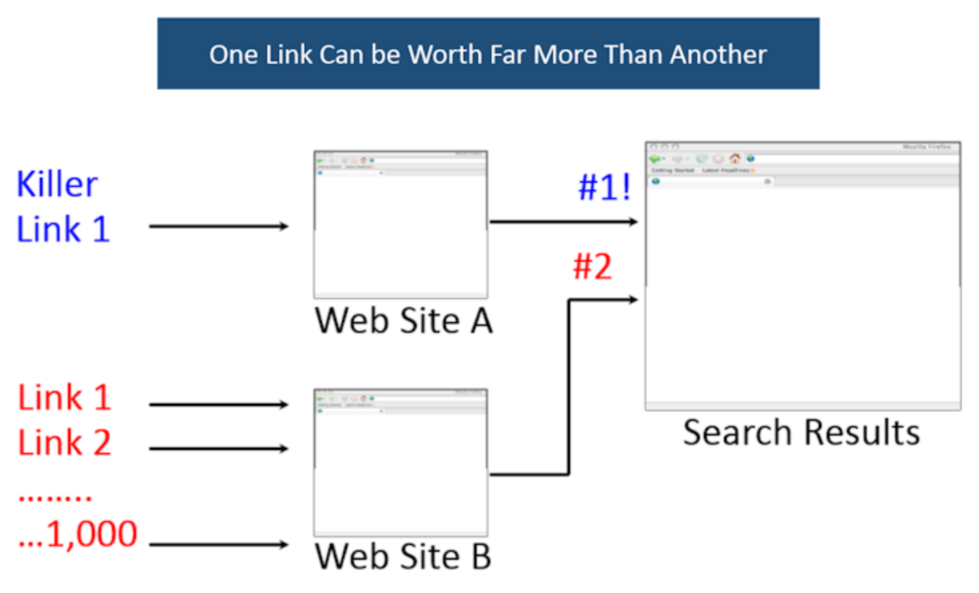

Not All Links Are Created Equal

So, more votes are better, right?

Well, that’s true in theory, but it’s a lot more complicated than that.

PageRank scores range from a base value of one to values that likely exceed trillions.

Higher PageRank pages can have a lot more PageRank to pass than lower PageRank pages. In fact, a link from one page can easily be worth more than one million times a link from another page.

But the PageRank of the source page of a link is not the only factor in play.

Google also looks at the topic of the linking page and the anchor text of the link, but those have to do with relevance and will be referenced in the next section.

It’s important to note that Google’s algorithms have evolved a long way from the original PageRank thesis.

The way that links are evaluated has changed in significant ways – some of which we know, and some of which we don’t.

What About Trust?

You may hear many people talk about the role of trust in search rankings and in evaluating link quality.

For the record, Google says it doesn’t have a concept of trust it applies to links (or ranking), so you should take those discussions with many grains of salt.

These discussions began because of a Yahoo patent on the concept of TrustRank.

The idea was that if you started with a seed set of hand-picked, highly trusted sites and then counted the number of clicks it took you to go from those sites to yours, the fewer clicks, the more trusted your site was.
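The click-counting idea described above can be sketched as a breadth-first search from the seed set. This is only an illustration of the concept as summarized here, not the method in the Yahoo patent and not something Google is known to use. The site names and link graph are hypothetical.

```python
from collections import deque


def click_distance(links, seeds):
    """Return the fewest clicks needed to reach each page from any seed site."""
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in distance:  # first visit is the shortest path in BFS
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance


# Hypothetical link graph: each key links to the sites in its list.
links = {
    "trusted-news": ["industry-blog"],
    "trusted-edu": ["industry-blog", "research-hub"],
    "industry-blog": ["your-site"],
    "research-hub": [],
    "link-farm": ["your-site"],
}

distances = click_distance(links, seeds=["trusted-news", "trusted-edu"])
print(distances["your-site"])  # clicks from the nearest trusted seed
```

Sites that cannot be reached from any seed, such as `link-farm` here, simply never appear in the result.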

Google has long said it doesn’t use this type of metric.

However, in 2013 Google was granted a patent related to evaluating the trustworthiness of links. We should note, though, that the existence of a granted patent does not mean it’s used in practice.

For your own purposes, however, if you want to assess a site’s trustworthiness as a link source, using the concept of trusted links is not a bad idea.

If a site does any of the following, it probably isn’t a good source for a link:

- Sells links to others.

- Has less than great content.

- Otherwise doesn’t appear reputable.

Google may not be calculating trust the way you do in your analysis, but chances are good that some other aspect of its system will devalue that link anyway.

Fundamentals Of Earning & Attracting Links

Now that you know that obtaining links to your site is critical to SEO success, it’s time to start putting together a plan to get some.

The key to success is understanding that Google wants this entire process to be holistic.

Google actively discourages, and in some cases punishes, schemes to get links in an artificial way. This means certain practices are seen as bad, such as:

- Buying links for SEO purposes.

- Going to forums and blogs and adding comments with links back to your site.

- Hacking people’s sites and injecting links into their content.

- Distributing poor-quality infographics or widgets that include links back to your pages.

- Offering discount codes or affiliate programs as a way to get links.

- And many other schemes where the resulting links are artificial in nature.

What Google really wants is for you to make a fantastic website and promote it effectively, with the result that you earn or attract links.

So, how do you do that?

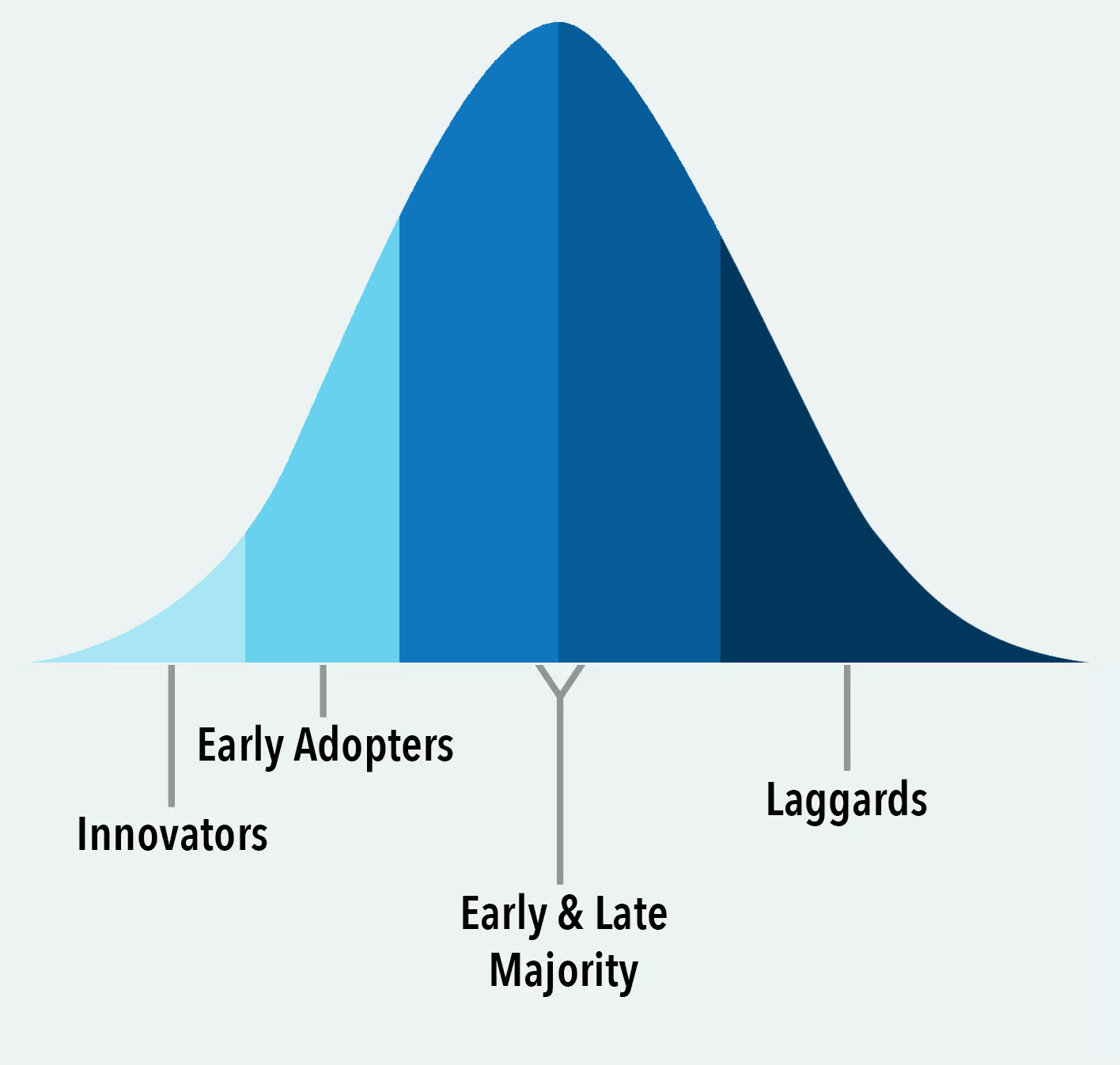

Who Links?

The first key insight is understanding who it is that might link to the content you create.

Here is a chart that profiles the major groups of people in any given market space (based on research by the University of Oklahoma):

Who do you think are the people that might implement links?

It’s certainly not the laggards, and it’s also not the early or late majority.

It’s the innovators and early adopters. These are the people who write on media sites or have blogs and might add links to your site.

There are also other sources of links, such as locally oriented sites like the local chamber of commerce or local newspapers.

You might also find some opportunities with colleges and universities if they have pages that relate to some of the things you’re doing in your market space.

Relevance: Will Users Swipe Right On Your Page?

You have to be relevant to a given topic.

Think of every visit to a page as an encounter on a dating app. Will users “swipe right” (thinking, “this looks like a good match!”)?

If you have a page about Tupperware, it doesn’t matter how many links you get – you’ll never rank for queries related to used cars.

This defines a limitation on the power of links as a ranking factor, and it shows how relevance also impacts the value of a link.

Consider a page on a site that is selling a used Ford Mustang. Imagine that it gets a link from Car and Driver magazine. That link is highly relevant.

Also, think of this intuitively. Is it likely that Car and Driver magazine has some expertise related to Ford Mustangs? Of course it does.

In contrast, imagine a link to that Ford Mustang from a site that usually writes about sports. Is the link still helpful?

Probably, but not as helpful because there is less evidence to Google that the sports site has a lot of knowledge about used Ford Mustangs.

In short, the relevance of the linking page and the linking site impacts how valuable a link might be considered.

What are some ways that Google evaluates relevance?

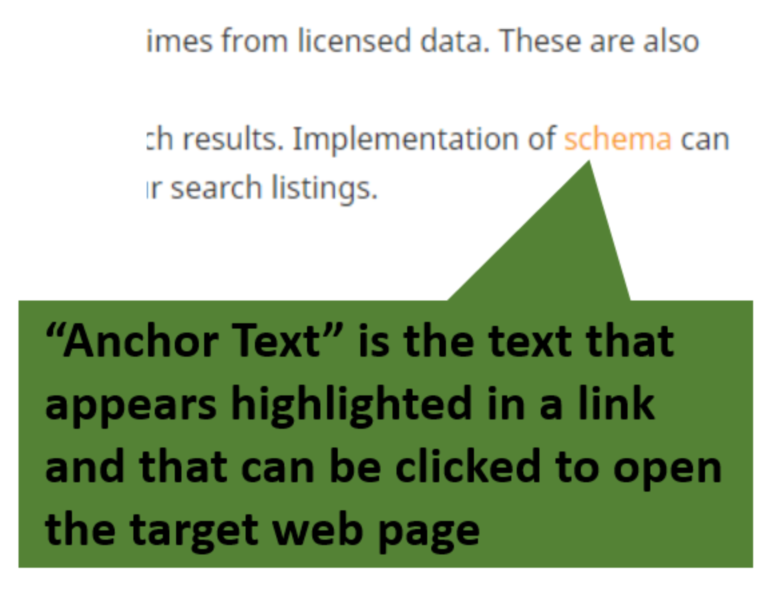

The Role Of Anchor Text

Anchor text is another aspect of links that matters to Google.

The anchor text helps Google confirm what the content on the page receiving the link is about.

For example, if the anchor text is the phrase “iron bathtubs” and the page has content on that topic, the anchor text, plus the link, acts as further confirmation that the page is about that topic.

Thus, links help Google evaluate both a page’s relevance and its authority.

Be careful, though, as you don’t want to go aggressively obtaining links to your page that all use your main keyphrase as the anchor text.

Google also looks for signs that you are manually manipulating links for SEO purposes.

One of the simplest indicators is if your anchor text looks manually manipulated.
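If you want a quick self-check on how varied your anchor text is, a sketch like the following can help. It assumes you have exported the anchor texts of your inbound links from a backlink tool. The sample list is invented, and no threshold is suggested because Google has not published one.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return (anchor text, share of all links) pairs, most common first."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return [(text, count / total) for text, count in counts.most_common()]


# Hypothetical anchor texts from a backlink export.
anchors = [
    "iron bathtubs",
    "Iron Bathtubs",
    "Acme Bath Co",
    "iron bathtubs",
    "https://example.com/tubs",
    "this guide",
]

shares = anchor_text_shares(anchors)
for text, share in shares:
    print(f"{share:.0%}  {text}")
```

A profile where one keyphrase accounts for most of the anchors is worth a closer look, while a natural profile usually mixes brand names, bare URLs, and generic phrases.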

Internal Linking

There is growing evidence that Google uses internal linking to evaluate how relevant a site is to a topic.

Properly structured internal links connecting related content are a way of showing Google that you have the topic well-covered, with pages about many different aspects.

By the way, anchor text is as important when creating internal links as it is for external, inbound links.

Your overall site structure is related to internal linking.

Think strategically about where your pages fall in your site hierarchy. If it makes sense for users, it will probably be useful to search engines.

The Content Itself

Of course, the most important indicator of the relevance of a page has to be the content on that page.

Most SEO professionals know that assessing content’s relevance to a query has become way more sophisticated than merely having the keywords a user is searching for.

Due to advances in natural language processing and machine learning, search engines like Google have vastly increased their competence in being able to assess the content on a page.

What are some things Google likely looks for in determining what queries a page should be relevant for?

- Keywords: While the days of keyword stuffing as an effective SEO tactic are (thankfully) way behind us, having certain words on a page still matters. My company has numerous case studies showing that merely adding key terms that are common among top-ranking pages for a topic is often enough to increase organic traffic to a page.

- Depth: The top-ranking pages for a topic usually cover the topic at the right depth. That is, they have enough content to satisfy searchers’ queries and/or are linked to/from pages that help flesh out the topic.

- Structure: Structural elements like H1, H2, and H3, bolded topic headings, and schema-structured data may help Google better understand a page’s relevance and coverage.
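The keyword point above can be sketched as a simple term-gap check: find words that appear on most top-ranking pages but not on yours. This is a rough illustration, not the method used in the case studies mentioned. The sample texts, the `min_share` cutoff of 0.6, and the lack of stop-word filtering are all simplifying assumptions.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and return its set of distinct words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def missing_common_terms(my_text, competitor_texts, min_share=0.6):
    """Terms used by at least `min_share` of competitor pages but absent from mine."""
    my_terms = tokenize(my_text)
    doc_counts = Counter()
    for text in competitor_texts:
        doc_counts.update(tokenize(text))  # count each term once per page
    needed = min_share * len(competitor_texts)
    return sorted(
        term
        for term, count in doc_counts.items()
        if count >= needed and term not in my_terms
    )


# Hypothetical page copy, shortened to one line each.
my_page = "Cast iron bathtubs for sale"
top_pages = [
    "Cast iron bathtubs: enamel finish, installation tips",
    "Installation guide for enamel cast iron bathtubs",
    "Cast iron bathtubs: weight, installation cost",
]

gaps = missing_common_terms(my_page, top_pages)
print(gaps)
```

Treat the output as a list of topics to consider covering, not as words to paste into the page.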

What About E-E-A-T?

E-E-A-T is a Google initialism standing for Experience, Expertise, Authoritativeness, and Trustworthiness.

It is the framework of the Search Quality Rater Guidelines, a document used to train Google Search Quality Raters.

Search Quality Raters evaluate pages that rank in search for a given topic using defined E-E-A-T criteria to judge how well each page serves the needs of a search user who visits it as an answer to their query.

Those ratings are accumulated in aggregate and used to help tweak the search algorithms. (They are not used to affect the rankings of any individual site or page.)

Of course, Google encourages all site owners to create content that makes a visitor feel that it is authoritative, trustworthy, and written by someone with expertise or experience appropriate to the topic.

The main thing to keep in mind is that the more YMYL (Your Money or Your Life) your site is, the more attention you should pay to E-E-A-T.

YMYL sites are those whose main content addresses things that might have an effect on people’s well-being or finances.

If your site is YMYL, you should go the extra mile in ensuring the accuracy of your content, and displaying that you have qualified experts writing it.

Building A Content Marketing Plan

Last but certainly not least, create a real plan for your content marketing.

Don’t just suddenly start doing a lot of random stuff.

Take the time to study what your competitors are doing so you can invest your content marketing efforts in a way that’s likely to provide a solid ROI.

One approach to doing that is to pull their backlink profiles using tools that can do that.

With this information, you can see what types of links they’ve been getting and, based on that, figure out what links you need to get to beat them.

Take the time to do this exercise and also to map which links are going to which pages on the competitors’ sites, as well as what each of those pages ranks for.

Building out this kind of detailed view will help you scope out your plan of attack and give you some understanding of what keywords you might be able to rank for.

It’s well worth the effort!
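As a starting point for that mapping exercise, here is a small sketch that groups a competitor's backlinks by the page they point to. It assumes you have exported the data from a backlink tool. The field names `source_domain` and `target_url` and the sample rows are hypothetical, so adjust them to match your tool's export.

```python
from collections import defaultdict


def links_by_target(backlinks):
    """Count distinct linking domains per target page, most-linked first."""
    grouped = defaultdict(set)
    for row in backlinks:
        grouped[row["target_url"]].add(row["source_domain"])
    return sorted(
        ((url, len(domains)) for url, domains in grouped.items()),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical rows from a competitor's backlink export.
backlinks = [
    {"source_domain": "news.example", "target_url": "/guides/cast-iron"},
    {"source_domain": "blog.example", "target_url": "/guides/cast-iron"},
    {"source_domain": "news.example", "target_url": "/guides/cast-iron"},
    {"source_domain": "local.example", "target_url": "/pricing"},
]

summary = links_by_target(backlinks)
for url, domain_count in summary:
    print(f"{domain_count} linking domains -> {url}")
```

Counting distinct domains rather than raw links keeps one site that links many times from skewing the picture.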

In addition, study your competitors’ content plans.

Learn what they are doing and carefully consider what you can do that’s different.

Focus on developing a clear differentiation in your content for topics that are in high demand with your potential customers.

This is another investment of time that will be very well spent.

Experience

As we traced above, Google started by focusing on ranking pages by authority, then found ways to assess relevance.

The third evolution of search was evaluating the site and page experience.

This actually has two separate but related aspects: the technical health of the site and the actual user experience.

We say the two are related because a technically sound site creates a good experience for both human users and the crawling bots that Google uses to explore and understand a site and add its pages to the index, the first step to qualifying for being ranked in search.

In fact, many SEO pros (and I’m among them) prefer to speak of SEO not as Search Engine Optimization but as Search Experience Optimization.

Let’s talk about the human (user) experience first.

User Experience

Google realized that authoritativeness and relevancy, as important as they are, were not the only things users were looking for when searching.

Users also want a good experience on the pages and sites Google sends them to.

What is a “good user experience”? It includes at least the following:

- The page the searcher lands on is what they would expect to see, given their query. No bait and switch.

- The content on the landing page is highly relevant to the user’s query.

- The content is sufficient to answer the intent of the user’s query but also links to other relevant sources and related topics.

- The page loads quickly, the relevant content is immediately apparent, and page elements settle into place quickly (all aspects of Google’s Core Web Vitals).
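For the Core Web Vitals mentioned in the last point, a short sketch can show how measured values map to ratings. The three metrics used here are Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). The thresholds are the ones Google has published at the time of writing and may change, so verify them against current documentation. The sample measurements are invented.

```python
# (good up to, needs improvement up to) per metric; anything above is "poor".
# Thresholds reflect Google's published guidance and are assumed to be current.
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_metric(name, value):
    """Rate one measurement as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Hypothetical field measurements for one page.
measurements = {"lcp_seconds": 2.1, "inp_ms": 340, "cls": 0.31}

ratings = {name: rate_metric(name, value) for name, value in measurements.items()}
for name, rating in ratings.items():
    print(f"{name}: {rating}")
```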

In addition, many of the suggestions above about creating better content also apply to user experience.

Technical Health

In SEO, the technical health of a site is how smoothly and efficiently it can be crawled by Google’s search bots.

Broken connections or even things that slow down a bot’s progress can drastically affect the number of pages Google will index and, therefore, the potential traffic your site can qualify for from organic search.
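One basic check along these lines is finding internal links that point to broken pages. The sketch below assumes you already have crawl output: a map of each page to the pages it links to, plus an HTTP status code for each URL. Both data structures and the sample URLs are hypothetical, and the sketch makes no network requests.

```python
def broken_link_report(links, status_codes):
    """Map each page to the link targets that returned a 4xx or 5xx status."""
    report = {}
    for page, targets in links.items():
        # URLs with no recorded status are skipped rather than assumed broken.
        broken = [t for t in targets if status_codes.get(t, 0) >= 400]
        if broken:
            report[page] = broken
    return report


# Hypothetical crawl output for a small site.
links = {
    "home": ["pricing", "old-promo"],
    "blog": ["old-promo", "guide"],
    "guide": ["home"],
}
status_codes = {
    "home": 200,
    "pricing": 200,
    "old-promo": 404,
    "guide": 200,
    "blog": 200,
}

report = broken_link_report(links, status_codes)
for page, broken in report.items():
    print(f"{page} links to broken pages: {', '.join(broken)}")
```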

The practice of maintaining a technically healthy site is known as technical SEO.

The many aspects of technical SEO are beyond the scope of this article, but you can find many excellent guides on the topic, including Search Engine Journal’s Advanced Technical SEO.

In summary, Google wants to rank pages that it can easily find, that satisfy the query, and that make it as easy as possible for the searcher to identify and understand what they were searching for.

What About the Google Leak?

You’ve probably heard by now about the leak of Google documents containing thousands of labeled API calls and many thousands of attributes for those data buckets.

Many assume that these documents reveal the secrets of the Google algorithms for search. But is that a warranted assumption?

No doubt, perusing the documents is interesting and reveals many types of data that Google may store or may have stored in the past. But some significant unknowns about the leak should give us pause.

- As Google has pointed out, we lack context around these documents and how they were used internally by Google, and we don’t know how out of date they may be.

- It is a huge leap from “Google may collect and store data point x” to “therefore data point x is a ranking factor.”

- Even if we assume the documents do reveal some things that are used in search, we have no indication of how they are used or how much weight they are given.

Given those caveats, it is my opinion that while the leaked documents are interesting from an academic point of view, they should not be relied upon for actually forming an SEO strategy.

Putting It All Together

Search engines want happy users who will come back to them again and again when they have a question or need.

They create and sustain happiness by providing the best possible results that satisfy that question or need.

To keep their users happy, search engines must be able to understand and measure the relative authority of webpages for the topics they cover.

When you create content that is highly useful (or engaging or entertaining) to visitors – and when those visitors find your content reliable enough that they would willingly return to your site or even seek you out above others – you’ve gained authority.

Search engines work hard to continually improve their ability to match the human quest for trustworthy authority.

As we explained above, that same kind of quality content is key to earning the kinds of links that assure the search engines you should rank highly for relevant searches.

That can be either content on your site that others want to link to or content that other quality, relevant sites want to publish, with appropriate links back to your site.

Focusing on these three pillars of SEO – authority, relevance, and experience – will increase the opportunities for your content and make link-earning easier.

You now have everything you need to know for SEO success, so get to work!

More resources:

- What Is Topical Authority & How Does It Work

- SEO Experts: Prepare For Search Generative Experience With “Search Experience Optimization”

- How Search Engines Work

Featured Image: Kapralcev/Shutterstock
